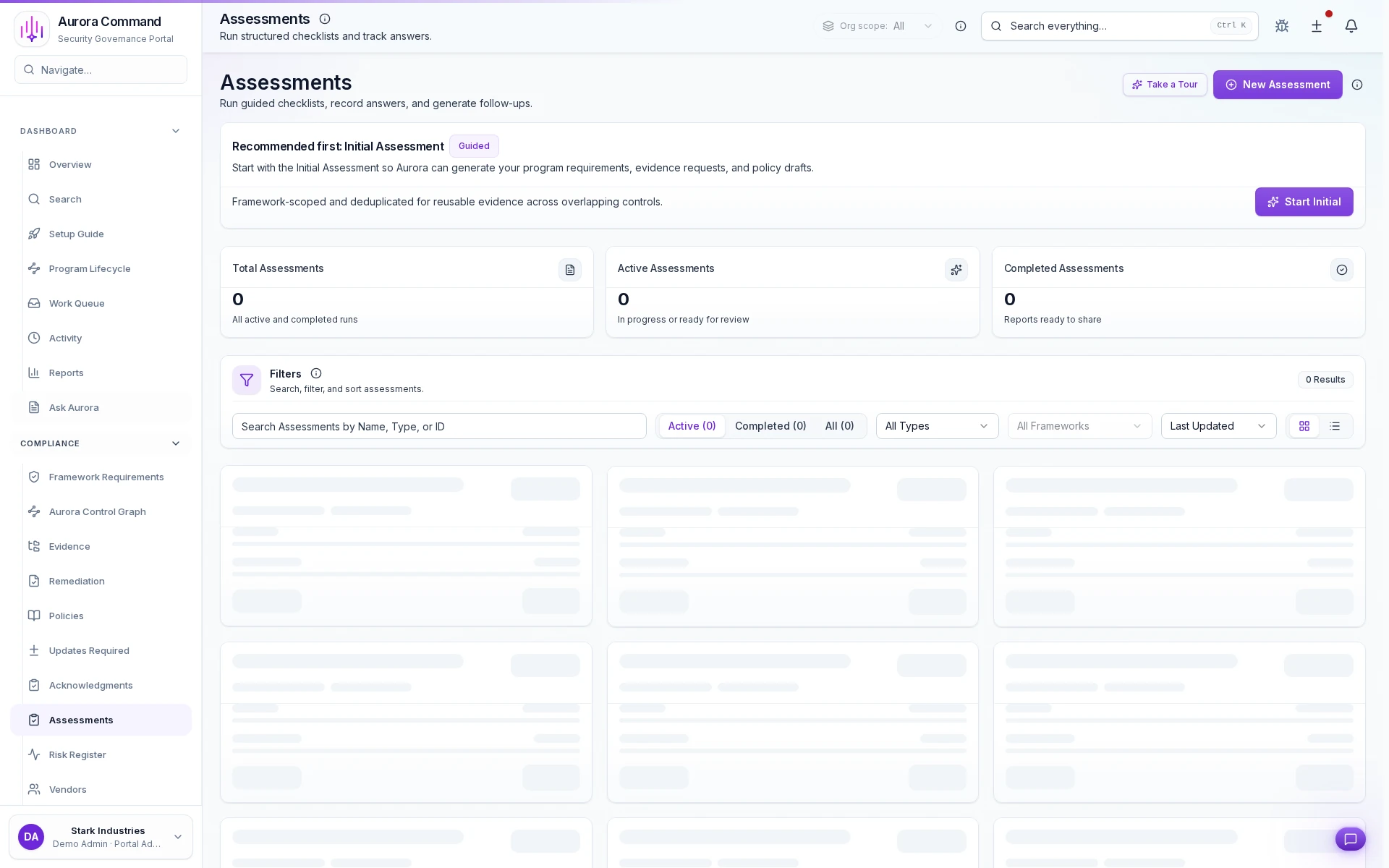

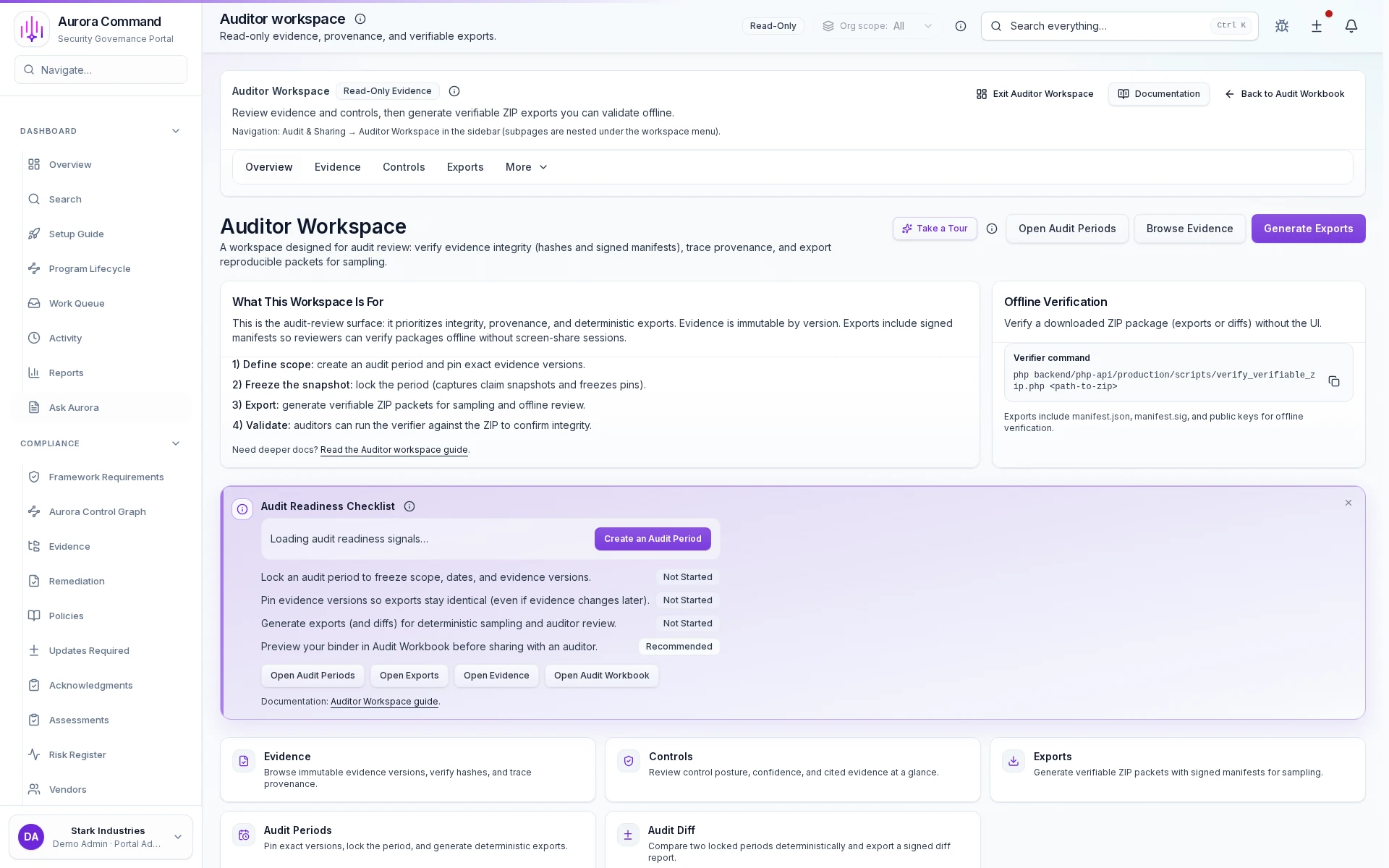

Assessments & Aurora Copilot

AI-Cited Drafts. Human-Approved Answers. Controlled Delivery.

Aurora Copilot drafts assessment responses from your approved answer library with inline evidence citations. Your team reviews and refines instead of rebuilding from scratch, then delivers through controlled, expiring links instead of email attachments.

- Copilot-drafted answers: AI-cited drafts from your approved answer library, refined by human reviewers

- Evidence-linked citations: Every response traces back to version-tracked proof artifacts

- Controlled reviewer sharing: Completed assessments delivered through expiring, logged, tiered links

- Multi-step approval chains: Sensitive language reviewed by legal, security, and compliance before delivery

Sample output

Cited Response Set

Completed answers with inline evidence citations and direct links to every supporting artifact.

Results That Speak for Themselves

Source-grounded

Copilot-Drafted Responses

Aurora Copilot surfaces cited, pre-approved language for every question so teams start from reviewed material instead of rebuilding from scratch.

Zero

Uncontrolled Attachments

Every completed assessment ships through expiring, logged links with tiered access controls. No more spreadsheets forwarded into the wild.

Review-ready

From Question to Cited Draft

Copilot matches incoming questions to your approved answer library and attaches evidence citations automatically. Reviewers verify instead of drafting.

From Intake to Controlled Delivery

1. Import and triage: Upload buyer or auditor questionnaires, auto-detect framework coverage, and assign section owners in one intake flow.

2. Copilot-draft with cited language: Aurora Copilot matches each question to approved answers and attaches evidence citations automatically.

3. Route for approval: Send high-risk or sensitive responses through legal, security, or compliance review with tracked sign-off.

4. Deliver through controlled access: Share completed assessments via expiring, logged links with tiered permissions and download tracking.
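
For readers who want a concrete picture of the drafting step, here is a minimal sketch of matching a question to an approved answer and carrying its citations along. It is an illustration only: this page does not describe how Aurora Copilot matches questions, so simple token overlap (Jaccard similarity) stands in for it, and `ApprovedAnswer`, `draft_response`, the evidence identifiers, and the `0.5` threshold are all hypothetical.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class ApprovedAnswer:
    """One reviewed library entry (hypothetical shape)."""
    question: str
    answer: str
    evidence_ids: tuple[str, ...]  # hypothetical artifact identifiers


def _tokens(text: str) -> set[str]:
    # Lowercase words with surrounding punctuation stripped.
    words = (w.strip(".,?!").lower() for w in text.split())
    return {w for w in words if w}


def jaccard(a: str, b: str) -> float:
    """Token-overlap similarity in [0, 1]; a stand-in for real matching."""
    ta, tb = _tokens(a), _tokens(b)
    if not ta or not tb:
        return 0.0
    return len(ta & tb) / len(ta | tb)


def draft_response(question: str, library: list[ApprovedAnswer],
                   threshold: float = 0.5) -> dict | None:
    """Return a cited draft for human review, or None if nothing matches well."""
    best = max(library, key=lambda e: jaccard(question, e.question), default=None)
    if best is None or jaccard(question, best.question) < threshold:
        return None  # no confident match: leave the question for a human
    return {
        "question": question,
        "draft": best.answer,
        "citations": list(best.evidence_ids),
        "status": "needs_review",  # drafts are never auto-approved
    }
```

In this sketch a question with no close match returns `None` rather than a weak guess, which mirrors the idea that drafts come only from approved language and always pass through a reviewer.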

Manual Questionnaire Grind vs. Copilot-Accelerated Workflow

Without Aurora

- A 200-question security review arrives Friday afternoon. Your team spends the weekend copying answers from last quarter's spreadsheet, hoping nothing changed.

- Answers reference policies that were updated two months ago, but nobody notices until the reviewer flags the discrepancy.

- Legal asks to review three sensitive answers, so someone pastes them into a Slack thread with no record of who approved the final wording.

- Completed questionnaires get emailed as Excel attachments. Within a week, three people have forwarded them to contacts you never authorized.

- Your SOC 2 evidence was refreshed last month, but the customer-facing answers still cite the old version. Nobody knows until audit season.

With Aurora

- Aurora Copilot drafts cited responses from your approved answer library. Your team reviews and refines instead of starting from zero.

- Every response cites specific, version-tracked evidence artifacts. Reviewers click through to the source and see exactly what backs each claim.

- Multi-step approval workflows route high-risk language through legal, security, and compliance with a tamper-proof chain of who reviewed and signed off.

- Share through Trust Center with tiered viewer access, expiring links, watermarking, and a full log of every download and view.

- Freshness tracking flags every answer whose underlying evidence has changed, expired, or been replaced, before you send it out again.
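
The freshness check in the last point can be pictured with a short sketch. It assumes each answer stores the artifact version it cited and that a registry holds the current version and expiry date of each artifact; the `Artifact` and `Citation` types, the `stale_reasons` function, and the artifact names are hypothetical and not taken from Aurora.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Artifact:
    """Current state of an evidence artifact (hypothetical shape)."""
    artifact_id: str
    version: int
    expires_on: date


@dataclass(frozen=True)
class Citation:
    """What an approved answer cited at the time it was approved."""
    artifact_id: str
    version: int


def stale_reasons(citations: list, registry: dict, today: date) -> list:
    """List why an answer's evidence is stale; an empty list means fresh."""
    reasons = []
    for c in citations:
        current = registry.get(c.artifact_id)
        if current is None:
            # The cited artifact no longer exists under this id.
            reasons.append(f"{c.artifact_id}: replaced or removed")
        elif current.version != c.version:
            reasons.append(
                f"{c.artifact_id}: cited v{c.version}, current v{current.version}"
            )
        elif current.expires_on < today:
            reasons.append(f"{c.artifact_id}: expired {current.expires_on.isoformat()}")
    return reasons
```

An answer would be held back from reuse whenever `stale_reasons` returns anything, so the changed, expired, and replaced cases from the list above each produce a visible reason.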

The Complete AI-Accelerated Assessment Engine

Intelligent Questionnaire Intake

Import questionnaires from any format, auto-detect framework alignment, tag by scope, and assign section owners. Every question is tracked from arrival to delivery.

Approved Answer Library

Build a curated library of reviewed, approved responses. Copilot draws from this library first, ensuring every draft starts from language your team has already vetted.

Copilot Cited Drafting

Aurora Copilot matches incoming questions to approved answers and attaches evidence citations in one pass. Human reviewers refine and approve, never draft from scratch.

Evidence-Linked Citations

Every response references specific, version-tracked artifacts. Reviewers see the proof behind each answer and click through to the source document.

Multi-Step Approval Chains

Route sensitive or high-risk language through legal, security, or compliance review with configurable approval gates and a tamper-proof sign-off record.

Controlled Assessment Delivery

Share completed assessments through tiered, expiring links with watermarking, access logs, and download tracking. Spreadsheet attachments stay off email.
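
As a rough illustration of what an expiring, tiered link involves, the sketch below signs a link payload with an HMAC and rejects it once the expiry time passes or the payload is altered. This is a generic pattern built on Python's standard `hmac` module, not a description of how Aurora implements delivery; `sign_link`, `verify_link`, the token layout, and the tier names are assumptions made for the example.

```python
from __future__ import annotations

import hashlib
import hmac
import time


def sign_link(assessment_id: str, tier: str, expires_at: int, secret: bytes) -> str:
    """Build a token 'id|tier|expiry|signature'. Fields must not contain '|'."""
    payload = f"{assessment_id}|{tier}|{expires_at}"
    sig = hmac.new(secret, payload.encode(), hashlib.sha256).hexdigest()
    return f"{payload}|{sig}"


def verify_link(token: str, secret: bytes, now: int | None = None) -> dict | None:
    """Return the link's claims if the token is authentic and unexpired."""
    try:
        assessment_id, tier, expires_raw, sig = token.split("|")
        expires_at = int(expires_raw)
    except ValueError:
        return None  # malformed token
    payload = f"{assessment_id}|{tier}|{expires_at}"
    expected = hmac.new(secret, payload.encode(), hashlib.sha256).hexdigest()
    # Constant-time comparison avoids leaking signature bytes through timing.
    if not hmac.compare_digest(sig.encode(), expected.encode()):
        return None  # tampered payload or wrong secret
    current = int(time.time()) if now is None else now
    if current >= expires_at:
        return None  # link has expired
    return {"assessment_id": assessment_id, "tier": tier, "expires_at": expires_at}
```

Because the tier is part of the signed payload, changing `viewer` to a higher tier invalidates the signature. Access logging and watermarking would sit on top of a check like this and are not shown.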

Artifacts reviewers recognize, plus sample previews of structure.

Connects to Your Stack

Questions Teams Ask About Assessments and Aurora Copilot

Let Copilot Draft Your Next Questionnaire With Review Control

Share a real questionnaire. We will show how Aurora Copilot drafts cited responses from approved language, routes them through your approval chain, and delivers the finished assessment through controlled, expiring links.

Share one request and we will show the path to a cited response set without losing approvals, ownership, or reviewer context.